It is written for voters, journalists, students, and local officials who need clear, sourced steps to produce transparent district summaries without overstating what the data prove.

What ‘district’ means for demographic analysis

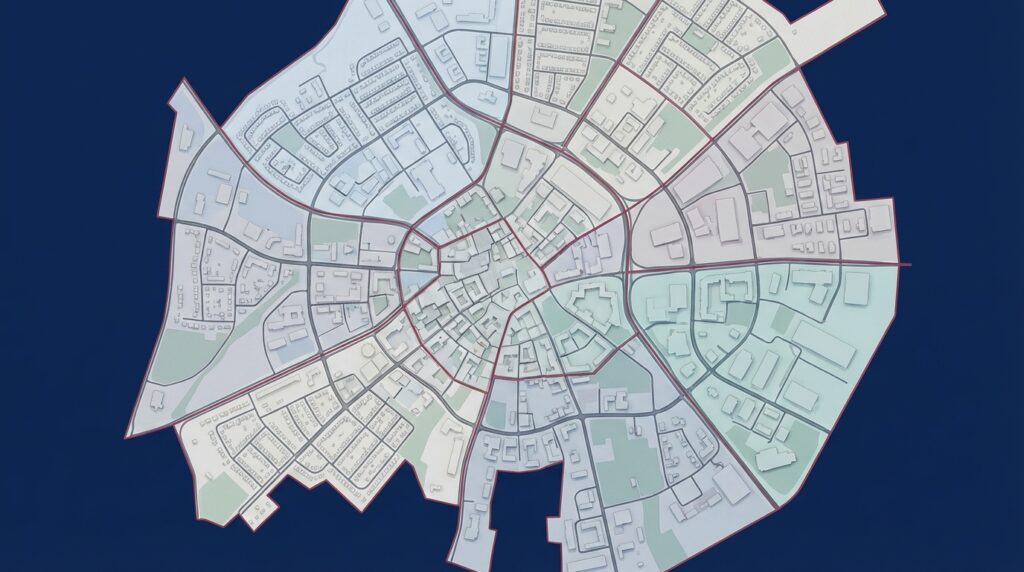

The word district names the specific legal electoral boundary you are analyzing, not a county or a school catchment. In practice, a district can cut across tracts and blocks, so analysts must treat the district as the analytic unit and document its exact footprint.

Start any district-level work by noting the boundary file version and its date. Using the wrong footprint changes population totals and can bias comparisons, so clear documentation is the first defense against mistakes. For guidance on available geographic products, see the Census Bureau's TIGER/Line shapefiles page.

Check your sources before reporting district findings

Consult primary geography and ACS pages before drawing firm conclusions, and record the exact boundary files and estimate years you use.

Note that redistricting cycles since 2020 may have moved census tracts or blocks between districts. Record whether your analysis uses pre- or post-redistricting boundaries so readers can interpret changes over time. Precise geography matters before any demographic aggregation.

Why the American Community Survey is the standard starting point for district work

The American Community Survey provides multi-topic demographic estimates between decennial censuses and is usually the baseline source for district work. It covers income, age, race and ethnicity, education, housing, and other topics that are useful for public-facing summaries, as described on the Census Bureau's About the American Community Survey page.

Because ACS is sample-based, analysts should record the table IDs and the years used, and avoid treating single estimates as definitive. For many small geographies the five-year ACS product balances topical breadth with improved sample size.
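
One way to keep table IDs and years on record is to store the data request itself in code. The sketch below builds, but does not send, a Census Data API query for an ACS five-year table. The function name `acs5_url`, the example year, and the FIPS codes are illustrative assumptions, and the endpoint pattern should be checked against the Census API documentation listed in the references before use.

```python
from urllib.parse import quote, urlencode


def acs5_url(year, variables, state_fips, county_fips):
    """Build a Census Data API URL for tract-level ACS 5-year data.

    `variables` are ACS variable codes; by ACS convention a code ending
    in E is an estimate and the matching code ending in M is its margin
    of error, so request both.
    """
    base = f"https://api.census.gov/data/{year}/acs/acs5"
    params = {
        "get": ",".join(["NAME"] + list(variables)),
        "for": "tract:*",
        "in": f"state:{state_fips} county:{county_fips}",
    }
    # Keep commas, colons, and the wildcard readable; encode spaces as %20.
    return f"{base}?{urlencode(params, safe=',:*', quote_via=quote)}"


# Illustrative request: total population (table B01003) with its margin of error.
url = acs5_url(2022, ["B01003_001E", "B01003_001M"], "12", "086")
print(url)
```

Saving the generated URL next to the results gives readers the exact table, year, and geography that produced each number.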

Comparing ACS 1-year and 5-year estimates and reporting margins of error

Margin of error reflects sampling uncertainty and must accompany any reported estimate. Write margins of error into figure captions and text, and avoid claiming precise change when ranges overlap. Plain phrasing helps: say estimates “suggest” a direction and show the range rather than making an absolute claim.
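
The overlap check described above can be sketched in a few lines. This example assumes ACS margins of error published at the 90 percent confidence level, so a standard error is approximated as the MOE divided by 1.645, and it assumes the two estimates are independent (for example, non-overlapping five-year periods). The function names and the numbers are hypothetical.

```python
import math

Z_90 = 1.645  # ACS margins of error are published at the 90 percent level


def moe_range(estimate, moe):
    """Return the (low, high) range implied by an estimate and its MOE."""
    return estimate - moe, estimate + moe


def ranges_overlap(est_a, moe_a, est_b, moe_b):
    """True when the two MOE ranges share at least one value."""
    low_a, high_a = moe_range(est_a, moe_a)
    low_b, high_b = moe_range(est_b, moe_b)
    return low_a <= high_b and low_b <= high_a


def significant_difference(est_a, moe_a, est_b, moe_b):
    """Approximate z-test for a difference between independent estimates."""
    se_a = moe_a / Z_90
    se_b = moe_b / Z_90
    z = (est_a - est_b) / math.sqrt(se_a ** 2 + se_b ** 2)
    return abs(z) > Z_90


# Hypothetical estimates for one district in two periods.
print(ranges_overlap(12400, 900, 13100, 850))
print(significant_difference(12400, 900, 13100, 850))
```

Overlapping ranges are a quick visual screen; the z-test is the more formal check, and both should be reported with the same hedged language.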

District-level data can describe aggregate characteristics and suggest trends, but it cannot prove individual behavior or causal relationships without stronger designs; use ACS five-year estimates as a baseline, validate with administrative records when available, and always report margins of error and mapping choices.

When margins of error are large, add qualifying language and consider whether aggregation to a larger geography or using five-year estimates reduces uncertainty. Document the choices and explain the implications for interpretation.
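
To see why aggregation can reduce relative uncertainty, the sketch below sums hypothetical tract estimates and combines their margins of error with the common root-sum-of-squares approximation. That approximation ignores covariance between the component estimates, so treat the result as a rough guide. All names and numbers are illustrative.

```python
import math

Z_90 = 1.645  # ACS margins of error are published at the 90 percent level


def aggregate(components):
    """Sum (estimate, moe) pairs; approximate the MOE of the sum."""
    total = sum(estimate for estimate, _ in components)
    moe = math.sqrt(sum(moe ** 2 for _, moe in components))
    return total, moe


def coefficient_of_variation(estimate, moe):
    """Standard error as a share of the estimate; lower is more reliable."""
    return (moe / Z_90) / estimate


# Hypothetical tract-level (estimate, MOE) pairs inside one district.
tracts = [(1200, 300), (900, 250), (1500, 320)]
total, total_moe = aggregate(tracts)

cv_single = coefficient_of_variation(1200, 300)
cv_total = coefficient_of_variation(total, total_moe)
print(total, round(total_moe, 1), round(cv_single, 3), round(cv_total, 3))
```

In this example the combined MOE is larger in absolute terms than any single tract's MOE, but smaller relative to the combined estimate, which is the sense in which aggregation helps.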

Using administrative records for education and student counts

Administrative education records, such as NCES EDGE and state enrollment files, provide near-complete counts for students and are recommended to verify or replace ACS estimates for school-age populations where available; for the program description, see the NCES page EDGE: Education Demographic and Geographic Estimates.

When using administrative counts, check that the reporting period and definitions match the question you are asking. Enrollment counts often follow school years, which may not align with ACS estimate years, and some local files use grade-level or residency definitions that differ from ACS categories.

Mapping and aligning geographies: TIGER/Line and district boundary files

Misaligned geographies produce biased aggregations and misleading comparisons when you sum tract or block values into a district. Use official shapefiles and a clear spatial-join method to avoid these errors; the Census TIGER/Line shapefiles page summarizes the available products.

Practical checks include verifying coordinate reference systems, deciding whether to clip or to weight partial overlaps, and recording the method used. Keep a short note describing spatial joins, any interpolation, and the shapefile versions so others can reproduce the steps.
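
The choice between counting whole tracts and weighting partial overlaps can change totals noticeably. The sketch below assumes the overlap shares have already been computed in GIS software from layers in a common projected coordinate system, and it assumes population is spread evenly within each tract, which is the main weakness of simple areal weighting. The field names and values are hypothetical.

```python
def area_weighted_total(tracts):
    """Sum tract populations weighted by the share of area inside the district."""
    total = 0.0
    for tract in tracts:
        share = tract["share_in_district"]
        if not 0.0 <= share <= 1.0:
            raise ValueError(f"share out of range for tract {tract['id']}")
        total += tract["population"] * share
    return total


def naive_total(tracts):
    """Count every tract that touches the district at full value."""
    return sum(t["population"] for t in tracts if t["share_in_district"] > 0)


# Hypothetical tracts; shares come from a prior GIS overlay step.
tracts = [
    {"id": "A", "population": 4000, "share_in_district": 1.0},
    {"id": "B", "population": 3000, "share_in_district": 0.4},
    {"id": "C", "population": 5000, "share_in_district": 0.0},
]

print(area_weighted_total(tracts), naive_total(tracts))
```

Reporting both totals, along with the method chosen, lets readers see how much the district figure depends on the handling of split tracts.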

For context about local candidates, use neutral primary sources. For example, a candidate's campaign site states biographical details, priorities, and platform points that can be cited when providing voter-facing candidate profiles. Keep candidate mentions light and clearly attributed.

Why aggregate data does not prove individual behavior: the ecological fallacy

The ecological fallacy occurs when analysts infer individual behavior from aggregate statistics. Aggregate shifts in a district do not prove individual-level changes, so avoid statements that attribute motives or actions to residents based solely on district totals; this limitation is described in the foundational ecological inference literature, including A Solution to the Ecological Inference Problem.

When a question requires individual-level inference, consider designs that use surveys or voter files, and treat any ecological inference model outputs as conditional on strong assumptions. Always describe those assumptions and validate results where possible.
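
A small calculation shows how little aggregate totals pin down about individuals. The sketch below computes deterministic bounds (often called the method of bounds) on the rate of some outcome within a group, given only the group's share of the district and the district-wide outcome rate. The function name and the example numbers are hypothetical.

```python
def method_of_bounds(group_share, outcome_share):
    """Bound the outcome rate within a group using only district totals.

    group_share: fraction of district residents in the group (0 < x <= 1).
    outcome_share: fraction of all residents with the outcome (0 <= t <= 1).
    """
    if not 0.0 < group_share <= 1.0:
        raise ValueError("group_share must be in (0, 1]")
    if not 0.0 <= outcome_share <= 1.0:
        raise ValueError("outcome_share must be in [0, 1]")
    # Lowest rate: everyone outside the group has the outcome first.
    lower = max(0.0, (outcome_share - (1.0 - group_share)) / group_share)
    # Highest rate: the outcome occurs only inside the group.
    upper = min(1.0, outcome_share / group_share)
    return lower, upper


# Hypothetical district: 60 percent in the group, 50 percent with the outcome.
low, high = method_of_bounds(0.6, 0.5)
print(round(low, 3), round(high, 3))
```

Here the same district totals are consistent with a within-group rate anywhere from roughly one in six to five in six, which is why narrower claims need individual-level data or clearly stated modeling assumptions.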

Small-area estimation techniques and how to report uncertainty

Small-area estimation can improve precision for small populations, but it does not eliminate uncertainty. Use modeling only when you can document inputs and run sensitivity checks; for general guidance on using ACS data for local analysis, see Guide: How to use American Community Survey data for local analysis.

When you choose a model, report the model type, the covariates used, and how margins of error or credible intervals were calculated. Provide simple sensitivity checks that show how results change with different assumptions, and include that information in public outputs.
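
A simple sensitivity check can be sketched with a composite (shrinkage) estimator that blends a noisy direct estimate with a synthetic one, such as a rate from a larger surrounding area. This is a deliberately simplified illustration, not a full small-area model: the model variance is an assumed input that the analyst varies, and all names and numbers are hypothetical.

```python
Z_90 = 1.645  # ACS margins of error are published at the 90 percent level


def composite_estimate(direct, direct_moe, synthetic, model_variance):
    """Blend a direct estimate with a synthetic one.

    The weight on the direct estimate grows as its sampling variance
    shrinks relative to the assumed model variance.
    """
    if model_variance < 0 or direct_moe <= 0:
        raise ValueError("model_variance must be >= 0 and direct_moe > 0")
    sampling_variance = (direct_moe / Z_90) ** 2
    weight = model_variance / (model_variance + sampling_variance)
    return weight * direct + (1.0 - weight) * synthetic


def sensitivity_table(direct, direct_moe, synthetic, model_variances):
    """Show how the blended estimate moves as the assumption changes."""
    return [
        (v, round(composite_estimate(direct, direct_moe, synthetic, v), 2))
        for v in model_variances
    ]


# Hypothetical rates in percent: direct 18.0 (MOE 4.935), synthetic 12.0.
for variance, estimate in sensitivity_table(18.0, 4.935, 12.0, [1.0, 4.0, 9.0]):
    print(variance, estimate)
```

Publishing a table like this alongside the headline figure shows readers how far the modeled estimate depends on an assumption rather than on the data.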

Use sensitivity checks to show robustness

Avoid presenting modeled point estimates without ranges. Use phrases like “model-based estimates suggest” and show how sensitive findings are to reasonable alternative inputs, so readers can judge robustness for themselves.

A practical checklist for replicable district demographic analysis

Follow a clear step-by-step workflow to keep analyses defensible. Start by defining the district boundary and recording the shapefile version. Then select ACS tables and years, noting table IDs and whether you used one- or five-year estimates; the Census Bureau's About the American Community Survey page is a helpful reference.

Next, check margins of error, consider administrative records for specific populations, align geographies with TIGER/Line shapefiles, and document any interpolation or weighting. Finish by writing a short uncertainty statement and including links to primary sources in public reports.

Decision criteria: when to trust ACS estimates versus administrative counts

ACS provides broad multi-topic coverage and is the usual baseline when you need many variables. Administrative counts excel for near-complete tallies of specific populations, such as student enrollment, and are preferable when coverage and alignment are adequate; the NCES page EDGE: Education Demographic and Geographic Estimates explains the scope of administrative education data.

Ask whether administrative and ACS definitions match, whether the geographic footprint aligns, and whether reporting periods overlap. Prefer administrative counts for school-age population analysis when they are complete and mappable to your district, but document why you selected one source over another.

Typical errors and pitfalls to avoid in district demographic work

Common mapping errors include summing county or tract totals without checking overlap, which changes totals if parts of those geographies lie outside the district. Always test spatial joins and describe the method used, as the Census TIGER/Line shapefiles resources make clear.

Avoid presenting point estimates without margins of error. Noisy estimates should be described as ranges and contextualized. Also guard against moving from aggregate change to claims about individual motives or behaviors without stronger supporting data.

Practical scenarios and examples for common district questions

Estimating a school-age population for planning typically starts with ACS 5-year tables for age cohorts, then compares those estimates with state or district enrollment files. If administrative counts differ substantially, document the reporting periods and any definitional differences before deciding which series to present; advice on combining ACS and administrative sources is available in local analysis guides such as Guide: How to use American Community Survey data for local analysis.
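
The comparison between an ACS estimate and an administrative count can be sketched as a simple range check. The function name, field names, and numbers below are hypothetical, and a count outside the ACS range is a prompt to review definitions and reporting periods, not proof that either source is wrong.

```python
def compare_sources(acs_estimate, acs_moe, admin_count):
    """Check whether an administrative count falls inside the ACS MOE range."""
    low = acs_estimate - acs_moe
    high = acs_estimate + acs_moe
    gap_pct = (admin_count - acs_estimate) / acs_estimate * 100
    return {
        "acs_range": (low, high),
        "admin_within_range": low <= admin_count <= high,
        "gap_pct": round(gap_pct, 1),
    }


# Hypothetical school-age figures: ACS 8,200 with MOE 650; enrollment 7,400.
result = compare_sources(8200, 650, 7400)
print(result)
```

Enrollment files can legitimately sit below an ACS age-cohort estimate, for example when they exclude private or home-schooled students, so record the definitional differences alongside the gap.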

When comparing demographic change across adjacent districts, map both footprints carefully and show uncertainty ranges. If MOEs overlap, avoid strong claims about differential change. Present side-by-side ranges and a short note explaining the limits of inference.

Communicating uncertainty to voters and stakeholders

Use plain-language templates that pair estimates with ranges. For example, write “Estimates suggest the school-age population is about X, with a range from Y to Z,” and attribute the numbers to ACS or to a named administrative source without asserting certainty.
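
The template above can be filled in by a small helper so every published number carries its range and source. The function name and the example inputs are hypothetical.

```python
def uncertainty_sentence(label, estimate, moe, source):
    """Return a plain-language sentence pairing an estimate with its range."""
    low = max(estimate - moe, 0)  # counts cannot fall below zero
    high = estimate + moe
    return (
        f"Estimates suggest the {label} is about {estimate:,}, "
        f"with a range from {low:,} to {high:,} (source: {source})."
    )


sentence = uncertainty_sentence(
    "school-age population", 8200, 650, "ACS 5-year estimates"
)
print(sentence)
```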

Include a short uncertainty statement in public releases, and link to primary sources so readers can verify tables and methods. Clear phrasing builds trust by showing what the data do and do not prove.

Documenting data, methods, and reproducibility

Record minimum metadata: data source, table IDs, estimate years, shapefile version, coordinate system, and any weighting or interpolation used. These elements let another analyst reproduce or critique your choices and improve transparency.
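
The minimum metadata listed above can be kept as a small structured record saved next to each output. The class name, field names, and example values below are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AnalysisMetadata:
    """Minimum metadata needed to reproduce a district summary."""
    data_source: str
    table_ids: list
    estimate_years: str
    shapefile_version: str
    coordinate_system: str
    weighting_method: str

    def missing_fields(self):
        """Names of fields left empty, so gaps are caught before release."""
        return [name for name, value in asdict(self).items() if not value]


# Hypothetical record for one analysis.
record = AnalysisMetadata(
    data_source="ACS 5-year estimates",
    table_ids=["B01003"],
    estimate_years="2018-2022",
    shapefile_version="TIGER/Line 2022",
    coordinate_system="EPSG:4269",
    weighting_method="area-weighted interpolation",
)

print(json.dumps(asdict(record), indent=2))
print(record.missing_fields())
```

Writing the record as JSON keeps it readable for non-programmers while still letting another analyst load it and rerun the same steps.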

When possible, share code snippets or a step list that shows data extraction, spatial joins, and aggregation. Note any privacy or licensing constraints, and include a short reproducibility note in public reports so readers understand what can be rerun and what cannot.

Summary: sensible standards for 2026 district demographic work

For 2026 public-facing district analysis, begin with ACS five-year estimates as the baseline and use administrative records to validate or replace ACS for some populations. Report margins of error and use official boundary files to avoid biased aggregations; these steps help produce defensible, transparent summaries, according to standard guidance on ACS and administrative sources such as the Census Bureau's About the American Community Survey page.

Document every choice, present uncertainty rather than definitive causal claims, and provide primary-source links so readers can verify tables and methods. This conservative, transparent workflow supports clearer civic dialogue about demographic change.

For small districts, ACS five-year estimates are generally preferred because they pool data to improve reliability; always report margins of error alongside estimates.

Use administrative counts, such as school enrollment files, when they provide near-complete coverage for the population of interest and can be mapped to the district footprint.

Pair estimates with ranges or MOEs and use plain phrases like "estimates suggest" while linking to the ACS or administrative source so readers can check the tables.

Where possible, link directly to primary tables and include a short reproducibility note so readers can verify the underlying sources and methods.

References

- https://www.census.gov/geographies/mapping-files/time-series/geo/tiger-line-file.html

- https://www.census.gov/programs-surveys/acs/about.html

- https://www.census.gov/programs-surveys/acs/guidance/estimates.html

- https://nces.ed.gov/programs/edge/

- https://press.princeton.edu/books/paperback/9780691046671/a-solution-to-the-ecological-inference-problem

- https://www.urban.org/research/publication/guide-using-american-community-survey-data

- https://michaelcarbonara.com/contact/

- https://michaelcarbonara.com/republican-candidate-for-congress-michael-car/

- https://michaelcarbonara.com/news/

- https://michaelcarbonara.com/about/

- https://www.census.gov/programs-surveys/acs/technical-documentation/user-notes/2022-04.html

- https://pmc.ncbi.nlm.nih.gov/articles/PMC4232960/

- https://www.census.gov/data/developers/data-sets/acs-5year.html