This article explains the main data sources (NAEP for assessment comparisons and NCES ACGR for graduation rates), shows how composite rankings work, and highlights what the available data do and do not say about Florida's Parental Rights in Education law. It offers a short checklist readers can use to evaluate ranking claims and recommends primary sources to consult.

What people mean by ‘lowest state in education’ – definition and context

When readers ask which state is ranked lowest in education, they usually mean a state that scores lowest on some published list. Those lists use different inputs and give a single ordered outcome, but the meaning of ‘lowest’ depends on the chosen measures and the weight given to each measure. National assessment releases and graduation statistics are common inputs.

Direct assessment results and graduation figures often point to different states as relatively weaker or stronger, so a single label is rarely defensible without naming the ranking source and its method. NAEP provides an assessment-based comparison, while ACGR captures graduation outcomes at the federal level.

Before accepting a headline, check primary sources such as NAEP releases and NCES graduation tables to see what the ranking actually measures.

Readers should know that composite indices take multiple indicators and convert them into one score. That produces a clear ranking, but it also layers judgment calls into the result: which indicators matter most, and how much do they matter? Identifying the underlying choices is the first step to interpreting any ‘lowest’ claim.

Clear naming helps: when someone cites a ‘lowest’ state, ask which ranking or dataset is meant and whether the comparison uses assessment scores, graduation rates, spending, or other inputs.

How state education rankings are constructed: common metrics and weighting

Most state rankings use a mix of indicators. Commonly included items are standardized test scores, graduation rates, per-pupil spending, teacher credentials, and measures of opportunity or student supports. Each input captures a different part of a state’s education system.

How those items are combined matters. Some lists weight test scores heavily, while others give more weight to finance and staffing. Missing or inconsistent data can also change outcomes, because analysts must decide how to handle gaps or proxies (see the related ERIC report).

Because composite rankings reflect method choices, two reputable lists can disagree about which state is lowest. Always look for a methodology section that explains indicator selection and weighting before treating a headline as definitive.
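The effect of weighting can be illustrated with a minimal Python sketch. The three states, their indicator scores, and both weighting schemes below are invented for illustration; they are not drawn from NAEP, NCES, or any published ranking, and real lists typically combine many more indicators.

```python
# Hypothetical, already-normalized indicator scores (0 = worst, 1 = best).
# These values are invented and describe no real state.
scores = {
    "State A": {"tests": 0.30, "graduation": 0.70, "spending": 0.60},
    "State B": {"tests": 0.55, "graduation": 0.35, "spending": 0.50},
    "State C": {"tests": 0.60, "graduation": 0.65, "spending": 0.20},
}


def composite(indicators, weights):
    """Weighted average of indicator scores; weights are assumed to sum to 1."""
    return sum(indicators[name] * weight for name, weight in weights.items())


def lowest_state(all_scores, weights):
    """Return the state with the smallest composite score under the given weights."""
    return min(all_scores, key=lambda state: composite(all_scores[state], weights))


# Two hypothetical methodologies applied to the same underlying data.
test_heavy = {"tests": 0.6, "graduation": 0.2, "spending": 0.2}
finance_heavy = {"tests": 0.2, "graduation": 0.2, "spending": 0.6}

print("Test-heavy weights:", lowest_state(scores, test_heavy))
print("Finance-heavy weights:", lowest_state(scores, finance_heavy))
```

Under these made-up numbers, the test-heavy weights place State A last while the finance-heavy weights place State C last, even though the underlying scores never change.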

NAEP and what its 2024 results show about state differences

NAEP, the Nation’s Report Card, is the primary national assessment for comparing student achievement across states. It samples students and reports average scores in subjects such as reading and math, which supports direct assessment comparisons.

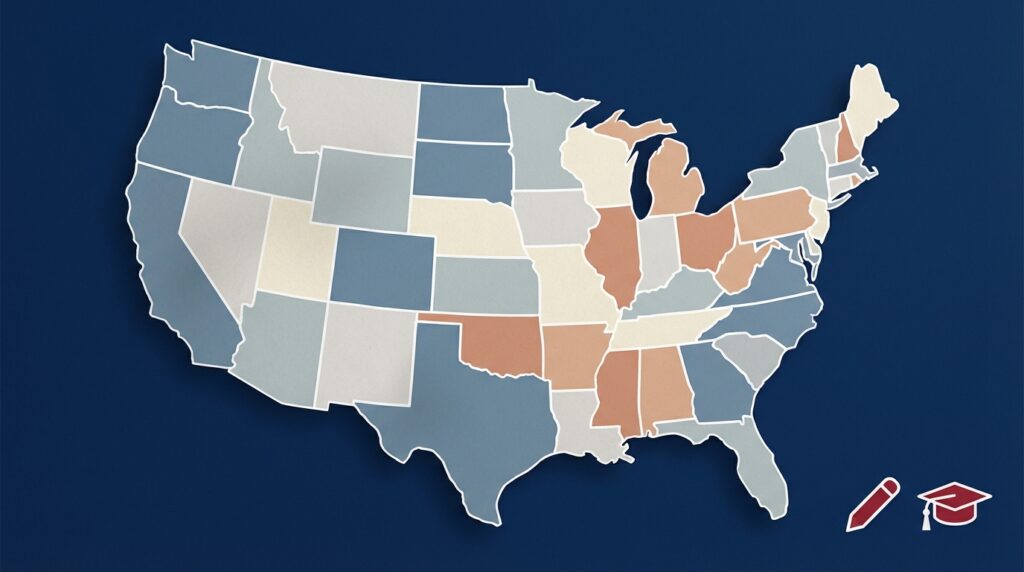

The NAEP 2024 releases show measurable state-by-state differences in student achievement, with lower average scores concentrated in several Southern states. The pattern is visible in the national reporting (The Nation’s Report Card) and in analysis from the Manhattan Institute (The Nation’s Report Card analysis).

There is no single definitive lowest state; the answer depends on the dataset and methodology cited, so always name the ranking source and check primary data from NAEP and NCES.

NAEP focuses on assessment outcomes and does not measure inputs such as spending or teacher pay. That makes it a strong tool for answering ‘how do students perform on a common assessment?’ but not for assigning blame or explaining causes behind score differences. State-level analyses, such as the Florida Department of Education’s NAEP review, provide additional context (Florida NAEP analysis).

Readers should treat NAEP as a primary reference when they want to compare achievement, and then consult other sources for a fuller picture of resources and outcomes.

Graduation rates across states: interpreting ACGR data

The Adjusted Cohort Graduation Rate, or ACGR, is the federal measure for high-school graduation. It follows a cohort of students and reports the share who graduate within four years, making it a standard metric for interstate graduation comparisons.
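As a rough illustration of the arithmetic, the sketch below computes a four-year rate from cohort counts. It assumes the standard federal definition, in which the cohort of first-time ninth graders is adjusted by adding students who transfer in and removing students who transfer out, emigrate, or die. The function name and all counts are hypothetical.

```python
def adjusted_cohort_graduation_rate(first_time_ninth_graders, transfers_in,
                                    removals, graduates):
    """Return a four-year graduation rate as a percentage.

    removals: students who transferred out, emigrated, or died (assumed definition).
    graduates: cohort members earning a regular diploma within four years.
    """
    adjusted_cohort = first_time_ninth_graders + transfers_in - removals
    if adjusted_cohort <= 0:
        raise ValueError("adjusted cohort must be positive")
    return 100.0 * graduates / adjusted_cohort


# Hypothetical cohort: 1,000 starters, 60 transfers in, 80 removals, 833 graduates.
rate = adjusted_cohort_graduation_rate(1000, 60, 80, 833)
print(f"ACGR: {rate:.1f}%")
```

Here the adjusted cohort is 980 students, so 833 graduates correspond to a rate of 85.0 percent. Note that the denominator is the adjusted cohort, not the original ninth-grade enrollment.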

ACGR and assessment-based rankings do not always point in the same direction, because graduation captures a different outcome than test scores. A state can have relatively low assessment averages but a middling graduation rate, or vice versa. Official graduation comparisons come from NCES and its indicator pages (NCES ACGR indicator).

That difference means readers should check both NAEP and ACGR when evaluating claims about overall educational performance, and avoid equating one measure with total system quality.

Composite rankings and examples: how sites like WalletHub create a ‘worst state’ list

Composite rankings combine multiple indicators into a single score and then sort states from best to worst. Indicators can include achievement measures, graduation, funding, student-teacher ratios, and other resource or outcome metrics.

WalletHub’s 2024 composite ranking, which combined achievement, outcomes, and resource indicators, identified Mississippi as the lowest-ranked state for education that year, according to its published methodology (WalletHub 2024 Best & Worst States for Education).

Composite lists are useful for drawing attention to systemic patterns, but they are one lens among several. If a headline cites a composite list, readers should quote the list and its method rather than repeating an unqualified ‘lowest state’ claim.

When reporters or voters cite a composite ranking, they should also note the publication date and whether the list includes recent assessment releases or spending updates.

Common mistakes and pitfalls when citing a ‘lowest’ state claim

A frequent mistake is mixing metrics. For example, treating a composite ranking as if it reflects only test scores, or citing a graduation-rate change as if it were an assessment result, confuses different measures.

Another pitfall is overstating causation. State-level changes in scores or graduation rates can follow many factors, and attributing them to a single law or event requires targeted, peer-reviewed research rather than headline comparisons.

Headlines can also oversimplify. A careful check: identify the ranking source, read the methodology, and consult primary data such as NAEP and NCES before accepting a definitive claim about the ‘lowest’ state.

How Florida’s Parental Rights in Education law appears in the discussion – what the data do and do not show

Florida’s Parental Rights in Education law, HB 1557, has been a focal point in national discussion about schooling priorities and curricula. The bill text and legislative record are available from the Florida Legislature (HB 1557 – Parental Rights in Education). See also the related coverage on educational freedom.

National datasets such as NAEP and ACGR document state-level outcomes, but they do not by themselves establish whether a single law caused observed changes in achievement or graduation rates. Demonstrating causal effects requires targeted empirical studies with controls for other factors.

In public debate, HB 1557 is often mentioned alongside performance questions, but linking a policy to an outcome at the state level demands peer-reviewed or otherwise rigorous analysis before drawing firm conclusions.

Practical checklist: how to evaluate a headline that names a state’s education rank

Step 1: Identify the ranking source. Is it NAEP, NCES ACGR, a composite list, or a media synthesis? Knowing the source clarifies what was measured.

Step 2: Read the methodology. Check which indicators were used and how they were weighted. That explains why two lists can disagree.

Step 3: Consult primary data. For assessment comparisons, check NAEP releases; for graduation comparisons, check NCES ACGR tables. These primary pages hold the raw indicators behind many summaries (The Nation’s Report Card).

Step 4: Look for peer-reviewed analysis before accepting causal claims about laws or recent policy changes.

Examples and scenarios: interpreting two illustrative comparisons

Scenario A: A state shows lower average NAEP scores but has an ACGR near the national median. That pattern could reflect local policies on student supports, alternative diploma pathways, or differences in assessment preparation. NAEP would flag the assessment gap; ACGR would not necessarily follow the same pattern.

Scenario B: A state ranks low on a composite list because it combines low spending per pupil with below-average achievement and weak teacher metrics. The composite score emphasizes multiple vulnerabilities, which can drop a state toward the bottom even if it is not the single lowest on any one indicator. WalletHub’s composite approach is an example of this method (WalletHub 2024 Best & Worst States for Education).

These scenarios show why citing the specific source is essential: the same state can appear differently depending on whether an analyst emphasizes achievement, outcomes, or resources.
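The Scenario B pattern can be reproduced with a small Python sketch. The four states, their scores, and the equal weighting are hypothetical and chosen only to show that a state can rank last on a composite without being last on any single indicator.

```python
# Hypothetical normalized scores (0 = worst, 1 = best); not real data.
scores = {
    "State W": {"spending": 0.25, "achievement": 0.25, "teachers": 0.25},
    "State X": {"spending": 0.10, "achievement": 0.80, "teachers": 0.80},
    "State Y": {"spending": 0.80, "achievement": 0.10, "teachers": 0.80},
    "State Z": {"spending": 0.80, "achievement": 0.80, "teachers": 0.10},
}
indicators = ["spending", "achievement", "teachers"]


def equal_weight_composite(state_scores):
    """Simple unweighted mean across all indicators (an assumed method)."""
    return sum(state_scores[name] for name in indicators) / len(indicators)


# The state with the lowest value on each individual indicator.
lowest_per_indicator = {
    name: min(scores, key=lambda state: scores[state][name]) for name in indicators
}

# The state with the lowest composite score.
lowest_composite = min(scores, key=lambda state: equal_weight_composite(scores[state]))

print("Lowest on each indicator:", lowest_per_indicator)
print("Lowest on the composite:", lowest_composite)
```

In this invented data, States X, Y, and Z are each last on exactly one indicator, yet State W is last on the composite because it is weak on all three at once.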

Case study: what WalletHub’s 2024 result for Mississippi illustrates

WalletHub used a composite methodology combining achievement, outcomes, and resource indicators to produce a single ordered list and concluded that Mississippi ranked lowest in 2024 under that approach (WalletHub 2024 Best & Worst States for Education).

That finding highlights how composite indices can surface states facing several overlapping challenges, but it does not equate to a verdict on every measure. For example, NAEP assessment patterns and NCES graduation tables may tell a different story for specific outcomes.

Using a single composite example is useful for demonstrating method choices, but readers should avoid generalizing that result into a definitive statement about overall system quality without looking at other data.

How journalists and voters should cite and contextualize ranking claims

Best practice is to name the ranking source and methodology in the headline or the first paragraph. For example: ‘According to WalletHub’s 2024 composite ranking, Mississippi ranked lowest.’ That framing flags the source immediately. For author background, see the About page.

Avoid implying that a single law or event explains the ranking unless rigorous research supports causation. Instead, report what the ranking measures and tell readers where the limits of interpretation lie.

Short attribution templates help maintain clarity: ‘According to [source] and its published methodology, [state] ranks X because [brief explanation].’ This keeps the claim tied to evidence rather than conjecture.

Where to find reliable, primary data and what to look for next

Primary sources for state comparisons are the NAEP site for assessment releases and the NCES pages for adjusted cohort graduation rates. These portals provide the underlying indicators used in many rankings (NCES ACGR indicator).

Independent rankings such as WalletHub or U.S. News provide comparative context but require a close read of methodology sections to understand what each result means. Watching for peer-reviewed research that examines policy impacts, including studies that specifically address laws like HB 1557, is the way to move from correlation toward causal claims.

Keep an eye on updated NAEP releases and NCES publications for the latest assessment and graduation data; those are the best primary references when verifying ranking headlines. See recent coverage on our News page.

Conclusion: a careful answer to ‘which state is ranked lowest in education?’

The straightforward answer is that there is no single, universal ‘lowest’ state. Which state appears lowest depends on the dataset and method: NAEP, NCES ACGR, and composite rankings can produce different orderings, so specify the source when repeating any ‘lowest’ claim.

WalletHub’s 2024 composite ranking identified Mississippi as lowest under its method, while NAEP and ACGR provide direct assessment and graduation measures that should also be consulted when making comparative statements (WalletHub 2024 Best & Worst States for Education).

Florida’s HB 1557, the Parental Rights in Education law, is part of public discussion about priorities in schooling, but national assessment and graduation datasets alone do not demonstrate that the law produced changes in statewide outcomes; targeted research is needed before assigning causation (HB 1557 – Parental Rights in Education).

Practical next steps: name your source, read its methods, check NAEP and NCES primary pages, and watch for rigorous studies that address policy impacts. That approach keeps reporting and civic discussion grounded in verifiable evidence.

No. Different datasets measure different things. NAEP compares assessment scores, NCES ACGR reports graduation rates, and composite lists combine multiple indicators; each can produce different rankings.

National assessment and graduation data do not by themselves establish causation; determining policy effects requires targeted, peer-reviewed studies that control for other factors.

Start with primary sources: NAEP releases for assessments and NCES ACGR pages for graduation rates, then read the methodology of any composite ranking cited.

Grounded reporting and careful citation will help voters and civic readers interpret state rankings responsibly.

References

- https://www.nationsreportcard.gov/

- https://nces.ed.gov/programs/coe/indicator_coi.asp

- https://wallethub.com/edu/best-states-for-education/

- https://michaelcarbonara.com/contact/

- https://www.flsenate.gov/Session/Bill/2022/1557/BillText/er/PDF

- https://files.eric.ed.gov/fulltext/ED612084.pdf

- https://manhattan.institute/article/the-nations-report-card-is-out-heres-what-the-results-tell-us-about-americas-schools

- https://www.fldoe.org/core/fileparse.php/13152/urlt/NAEPANALYSIS.pdf

- https://michaelcarbonara.com/issue/educational-freedom/

- https://michaelcarbonara.com/news/

- https://michaelcarbonara.com/about/